[MMO] Centrifugo

- Seller kick

- Creation date

- Tags centrifugo mmo

Integration with scalable real-time messaging server.


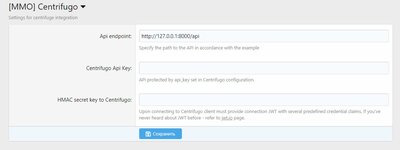

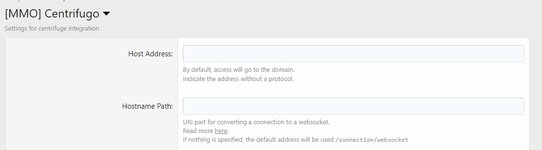

Adds integration with Centrifugo.

Centrifugo is a self-hosted service which can handle connections over a variety of real-time transports and provides a simple publish API. Centrifugo integrates well with any application – no need to change an existing application architecture to introduce real-time features. Just let Centrifugo deal with persistent connections.

Great performance

Centrifugo is written in Go with some smart optimizations inside. It performs well: a test stand handled one million WebSocket connections and 30 million delivered messages per minute on hardware comparable to one modern server machine.

Feature-rich

Many built-in features help you build an attractive real-time application in limited time. Centrifugo provides different types of subscriptions, hot channel history, instant presence, and RPC calls. It can also proxy connection events to the application backend over HTTP or gRPC, and more.
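As a rough illustration of proxying connection events, the sketch below shows what a backend handler for a connect event might return. It assumes the HTTP connect proxy response shape (a `result` object carrying the user ID, or a `disconnect` object with a code and reason). The `SESSIONS` store, the `handle_connect_proxy` name, and the 4501 code are hypothetical and chosen only for this example, so check the field names against the proxy documentation for your Centrifugo version.

```python
from typing import Optional

# Hypothetical session store: session id -> user id.
# In a real application this would be the backend's own session lookup.
SESSIONS = {"abc123": "42"}


def handle_connect_proxy(session_id: Optional[str]) -> dict:
    """Build the JSON body a backend could return for a proxied connect event.

    Assumed response shape: {"result": {"user": "<id>"}} to accept the
    connection, {"disconnect": {...}} to reject it.
    """
    user_id = SESSIONS.get(session_id) if session_id else None
    if user_id is None:
        # Custom disconnect code picked for illustration only.
        return {"disconnect": {"code": 4501, "reason": "unauthorized"}}
    return {"result": {"user": user_id}}


if __name__ == "__main__":
    print(handle_connect_proxy("abc123"))
    print(handle_connect_proxy(None))
```

The handler is a pure function, so it can be wired into whatever HTTP framework the backend already uses.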

Built-in Redis, KeyDB, and Tarantool engines, or the NATS broker, make it possible to scale connections over different machines. With consistent sharding of Redis, KeyDB, and Tarantool, it's possible to handle millions of active connections with reasonable hardware requirements.

Used in production

Started almost 10 years ago, Centrifugo (and the Centrifuge library for Go that it is built on) is a mature server successfully used in production by many companies around the world: Badoo, Ably, ManyChat, Grafana, and others.

What is real-time messaging?

Real-time messaging helps build interactive applications in which events are delivered to users almost immediately after the application backend acknowledges them. Data is pushed into a persistent connection, which keeps delivery latency minimal.

Chats, live comments, multiplayer games, and streaming metrics can all be built on top of a real-time messaging system.

Centrifugo handles persistent connections from clients over bidirectional transports (WebSocket, SockJS) and unidirectional transports (SSE/EventSource, HTTP-streaming, gRPC), and provides an API to publish messages to online clients in real time.
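A minimal sketch of what a call to the publish API could look like from a backend. It assumes the JSON command format with a `method` and `params` object posted to an `/api` endpoint and authorized with an `apikey` header. The endpoint path and header name differ between Centrifugo versions, and the URL, key, and channel below are placeholders, so treat this as an illustration rather than the exact integration this add-on uses.

```python
import json
import urllib.request


def build_publish_request(api_url: str, api_key: str, channel: str, data: dict) -> urllib.request.Request:
    """Build (but do not send) an HTTP request that publishes `data` into `channel`."""
    body = json.dumps(
        {"method": "publish", "params": {"channel": channel, "data": data}}
    ).encode("utf-8")
    return urllib.request.Request(
        api_url,
        data=body,
        headers={
            "Content-Type": "application/json",
            # Assumed auth scheme; newer versions may expect a different header.
            "Authorization": f"apikey {api_key}",
        },
        method="POST",
    )


if __name__ == "__main__":
    req = build_publish_request(
        "http://localhost:8000/api", "<API_KEY>", "news", {"text": "hello"}
    )
    print(req.get_method(), req.full_url)
    print(req.data.decode("utf-8"))
    # urllib.request.urlopen(req) would send it to a running server.
```

Building the request separately from sending it keeps the example runnable without a server.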

Scalability

Another important thing is scalability. As your application grows, more and more users will establish persistent connections with your real-time endpoint. A modern server machine can handle thousands of open connections, but the power of one process is limited: you will eventually run out of available CPU or memory. So at some point you may have to scale user connections over several machines. Another reason to scale connections over several machines is high availability (when one server goes out of order).

There are many real-time messaging solutions on GitHub and among paid online services, but only a few of them provide scalability out of the box; most work only in one process. I don't want to say that Centrifugo is the only server that scales. There are still many alternatives like Socket.IO, SocketCluster, Pushpin and tons of others. My point is that the ability to scale is one of the main things you should think about when searching for a real-time solution or building one from scratch. You can't really predict how fast your app will run out of available resources on a single machine. Software scalability is not a premature optimization, and in most cases having a scalable solution out of the box will simply give you more room for improving application functionality.

Many online services are able to scale too. But look at the pricing: most of those solutions are rather expensive. In the case of pusher.com you pay $500 a month but get only 10k connections max and a strictly limited number of monthly messages that you have to keep an eye on. This is ridiculous. Of course, Centrifugo is self-hosted and you must spend your own server capacity to run it. But I suppose the cost is not comparable in many cases.

Centrifugo scales well with Redis PUB/SUB, supports application-side Redis consistent sharding out of the box, and integrates with Redis Sentinel for high availability. We served up to 500k connections with Centrifugo, using 10 Centrifugo node pods for connections in Kubernetes and only one Redis instance, which consumed only 60% of a single processor core!
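To make the idea of application-side consistent sharding concrete, here is a small sketch that maps a channel name to one of several Redis shards using jump consistent hashing. It is a generic illustration of the technique, not necessarily the algorithm Centrifugo uses internally, and the shard addresses and the `shard_for_channel` helper are hypothetical.

```python
import hashlib


def jump_hash(key: int, num_buckets: int) -> int:
    """Jump consistent hash: map a 64-bit integer key to a bucket in [0, num_buckets)."""
    if num_buckets <= 0:
        raise ValueError("num_buckets must be positive")
    b, j = -1, 0
    while j < num_buckets:
        b = j
        # 64-bit linear congruential step, masked to emulate unsigned overflow.
        key = (key * 2862933555777941757 + 1) & 0xFFFFFFFFFFFFFFFF
        j = int((b + 1) * ((1 << 31) / ((key >> 33) + 1)))
    return b


def shard_for_channel(channel: str, shards: list) -> str:
    """Pick the shard responsible for a channel (hypothetical helper)."""
    digest = hashlib.sha256(channel.encode("utf-8")).digest()
    key = int.from_bytes(digest[:8], "big")
    return shards[jump_hash(key, len(shards))]


if __name__ == "__main__":
    shards = ["redis-0:6379", "redis-1:6379", "redis-2:6379"]  # placeholder addresses
    for channel in ("news", "chat:42", "metrics"):
        print(channel, "->", shard_for_channel(channel, shards))
```

The useful property is that when a shard is appended to the list, a channel either stays on its current shard or moves to the new one, so only a fraction of channels are remapped.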

There is also an ongoing pull request that adds the possibility to scale PUB/SUB with a NATS server as the broker.
